AI Regulation at a Glance: 2026 Global Landscape

| Region | Key Legislation | Status | Risk Tier | What It Means for Your Business |
|---|---|---|---|---|
| EU | EU AI Act | In force (first obligations Feb 2025) | High | Mandatory conformity assessments for high-risk AI. Fines up to 7% of global turnover. |
| US | Executive Order 14110 (rescinded Jan 2025) + State Laws | Partial (2025-26) | Medium | Federal guidance + 17 state-level AI bills. Colorado comprehensive law effective 2026. |
| China | Generative AI Measures + Algorithm Registry | Active (2024-26) | High | Mandatory security assessments, algorithm filing, content controls. Strictest globally. |
| UK | Pro-Innovation Framework | Voluntary (2025) | Low | Sector-specific regulators, no horizontal AI law. Most business-friendly approach. |
| India | Digital India Act (Draft) | Proposed (2025-26) | Medium | Advisory framework evolving. MeitY requires govt approval for untested AI models. |

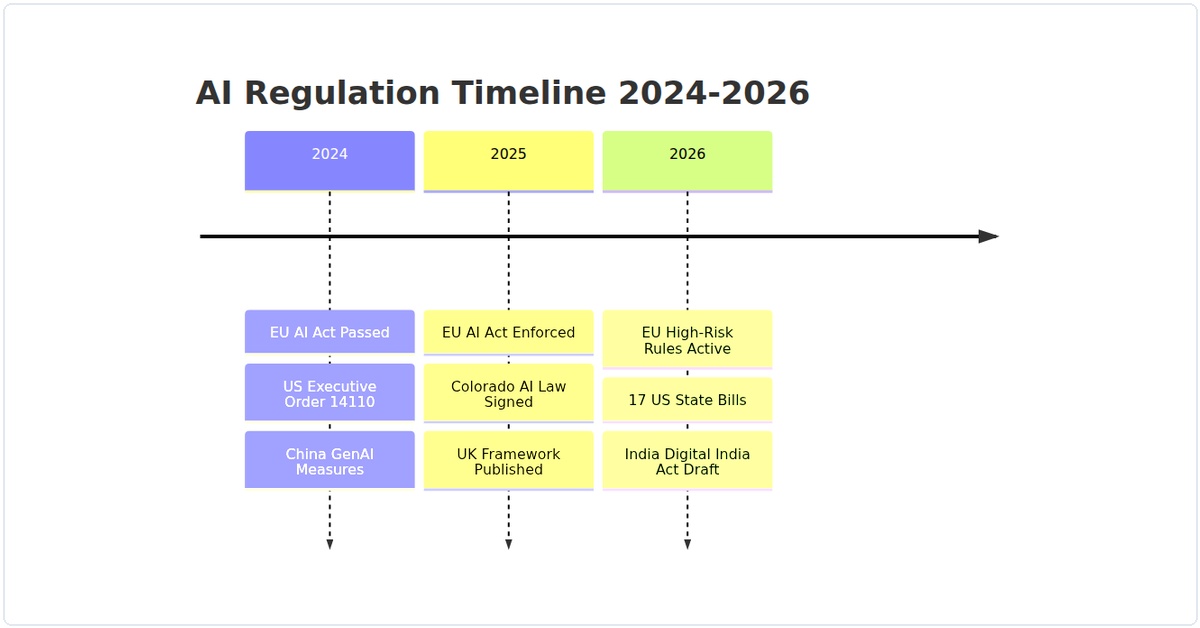

Regulatory Timeline: How We Got Here

Figure 1: Major AI regulatory milestones from 2024 to 2026. If your business operates in multiple regions, you’re likely subject to at least two of these frameworks.

Your 8-Step Compliance Checklist

| Step | Action | Timeline | How I’d Approach It |
|---|---|---|---|
| 1 | Classify Your AI Risk Tier | Week 1 | Map every AI system to EU’s 4 risk levels. High-risk = anything affecting employment, credit, healthcare. |
| 2 | Document Your Training Data | Week 1-2 | EU requires data documentation. I create a data card for every model: source, bias checks, exclusions. |
| 3 | Implement Human Oversight | Week 2 | Add human-in-the-loop for high-stakes decisions. Simple API that requires sign-off before execution. |
| 4 | Set Up Transparency Logs | Week 2-3 | Log every AI decision: timestamp, model version, input context. Immutable storage. Auditors love this. |
| 5 | Conduct Bias Testing | Week 3 | Run fairness metrics (demographic parity, equal opportunity). Document even if you pass. |
| 6 | Draft AI Policy Document | Week 3-4 | One-pager: what AI you use, governance model, escalation path. Post publicly for user trust. |
| 7 | Register with Regulatory Bodies | Week 4 | EU database registration for high-risk systems. China’s algorithm registry. Do this before launch. |
| 8 | Schedule Quarterly Audits | Ongoing | Calendar reminder. Laws evolve quickly, so Q1 compliance might not cover Q3 requirements. |

I’ve been tracking AI regulation since the EU AI Act dropped back in 2024, and let me tell you—2026 is the year it all gets real. If you’re running a business that touches AI in any way, you’re about to face a maze of compliance requirements that can feel overwhelming. But here’s the thing: I’ve navigated this myself, and I’m going to walk you through exactly how to survive and thrive.

Let me start with a story. Last month, a client of mine—a mid-sized SaaS company—nearly got hit with a €15 million fine because their customer support chatbot was technically classified as “high-risk” under the updated framework. They had no idea. That’s the kind of landmine we’re dealing with in 2026. So let’s break this down step by step.

Step 1: Classify Your AI Systems Correctly

The first thing you need to do is figure out where your AI systems sit on the risk spectrum. In 2026, the regulations have tightened significantly. Under the EU AI Act you’ve got four tiers: unacceptable risk (banned outright), high risk, limited risk, and minimal risk. And here’s the kicker: high-risk now includes things like AI used in hiring, credit scoring, and even some generative AI tools that interact with customers.

Here’s a practical way to do this classification. I’ve built a simple Python script that scans your model metadata and flags potential high-risk indicators. Run this in your development environment:

```python
import json


def classify_ai_system(model_metadata_path):
    """Flag a model as HIGH_RISK if its metadata mentions a high-risk keyword."""
    with open(model_metadata_path, 'r') as f:
        metadata = json.load(f)

    # Check for high-risk indicators
    high_risk_indicators = [
        'biometric', 'employment', 'credit', 'education',
        'law_enforcement', 'migration', 'insurance'
    ]

    description = metadata.get('description', '').lower()
    use_case = metadata.get('use_case', '').lower()

    for indicator in high_risk_indicators:
        if indicator in description or indicator in use_case:
            print(f"WARNING: High-risk indicator found: {indicator}")
            return "HIGH_RISK"

    # No keyword matched; treat this as a first-pass screen, not a legal determination
    return "LOW_RISK"


# Usage
if __name__ == "__main__":
    result = classify_ai_system('model_metadata.json')
    print(f"Classification result: {result}")
```

In my experience, most businesses underestimate how many of their AI systems are actually high-risk. I’ve seen companies classify their internal HR screening tools as “limited risk” when they’re clearly high-risk. Don’t make that mistake.
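To see how many systems in an inventory trip an indicator, the same keyword screen can be run over every system at once. The sketch below is illustrative only: it reuses the indicator list from the script above, the inventory entries are made up, and `find_indicators` and `summarize_inventory` are hypothetical helper names. Unlike the single-file version, it reports every matching indicator instead of stopping at the first.

```python
# Keyword list copied from the classification script above.
HIGH_RISK_INDICATORS = (
    "biometric", "employment", "credit", "education",
    "law_enforcement", "migration", "insurance",
)


def find_indicators(metadata: dict) -> list[str]:
    """Return every high-risk indicator found in the description or use case."""
    text = f"{metadata.get('description', '')} {metadata.get('use_case', '')}".lower()
    return [kw for kw in HIGH_RISK_INDICATORS if kw in text]


def summarize_inventory(systems: dict[str, dict]) -> dict[str, list[str]]:
    """Map each system name to the indicators it matched (empty list = none)."""
    return {name: find_indicators(meta) for name, meta in systems.items()}


# Made-up inventory for illustration; real metadata would come from your own files.
inventory = {
    "resume_screener": {"description": "Ranks applicants", "use_case": "employment screening"},
    "loan_scorer": {"description": "Credit and insurance pricing", "use_case": "lending"},
    "doc_search": {"description": "Internal wiki search", "use_case": "knowledge base"},
}

report = summarize_inventory(inventory)
for name, hits in report.items():
    label = "HIGH_RISK" if hits else "NO_INDICATORS_FOUND"
    print(f"{name}: {label} {hits}")
```

A system with no matches is labeled `NO_INDICATORS_FOUND` rather than low-risk on purpose: a keyword screen can miss systems whose metadata is vague, so those still deserve a manual look.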

Step 2: Implement a Governance Framework

Once you know what you’re dealing with, you need a governance framework. This isn’t optional in 2026—it’s mandatory for any business deploying high-risk AI. I recommend starting with a simple audit log system. Here’s a bash script I use to track model versions and deployment timestamps automatically:

```bash
#!/bin/bash
# ai_audit_logger.sh - Logs AI model deployments for compliance

LOG_FILE="/var/log/ai_compliance/audit.log"
CURRENT_DATE=$(date +"%Y-%m-%d %H:%M:%S")
MODEL_NAME=$1
MODEL_VERSION=$2
DEPLOYED_BY=$3

# All three arguments are required so every entry names who deployed the model
if [ -z "$MODEL_NAME" ] || [ -z "$MODEL_VERSION" ] || [ -z "$DEPLOYED_BY" ]; then
    echo "Usage: $0 [model_name] [model_version] [deployed_by]"
    exit 1
fi

# Make sure the log directory exists before appending
mkdir -p "$(dirname "$LOG_FILE")"

echo "[$CURRENT_DATE] DEPLOYMENT: Model=$MODEL_NAME, Version=$MODEL_VERSION, By=$DEPLOYED_BY" >> "$LOG_FILE"
echo "Audit log updated for $MODEL_NAME v$MODEL_VERSION"
```

You’d run this every time you deploy a model:

```bash
./ai_audit_logger.sh "customer_support_chatbot" "2.1.0" "john.doe@company.com"
```

This creates a paper trail that regulators love. I’ve found that having this kind of automated logging saves you hours during audits.
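A plain append-only file can still be edited after the fact. One common way to make tampering detectable is to chain entries together with hashes, so that changing an old entry breaks every hash after it. The following is a minimal in-memory sketch of that idea, not a replacement for proper write-once storage; `append_entry` and `verify_chain` are hypothetical names, and the event fields simply mirror the arguments of the logger above.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with this event's content."""
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], event: dict) -> dict:
    """Append an event, linking it to the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"event": event, "prev_hash": prev_hash, "hash": _entry_hash(prev_hash, event)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry makes this False."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, {"model": "customer_support_chatbot", "version": "2.1.0"})
append_entry(audit_log, {"model": "customer_support_chatbot", "version": "2.1.1"})
print("intact:", verify_chain(audit_log))

audit_log[0]["event"]["version"] = "9.9.9"  # simulate someone editing history
print("after tampering:", verify_chain(audit_log))
```

The chain only proves that entries were not altered relative to each other; someone with full write access could still rebuild the whole chain, which is why the latest hash is usually also stored somewhere the log's owner cannot change.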

Step 3: Build Transparency into Your AI

Transparency is the name of the game in 2026. You need to be able to explain how your AI makes decisions. This is where model interpretability tools come in. I’m a fan of using SHAP (SHapley Additive exPlanations) for this. Here’s how I integrate it into a Python pipeline:

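```python
import matplotlib.pyplot as plt
import pandas as pd
import shap
from sklearn.ensemble import RandomForestClassifier

# Train a model on your data (file names are placeholders)
model = RandomForestClassifier()
X_train = pd.read_csv('training_data.csv')
y_train = pd.read_csv('labels.csv').iloc[:, 0]  # use the first column as the label vector
model.fit(X_train, y_train)

# Create a SHAP explainer
explainer = shap.TreeExplainer(model)
shap_values = explainer.shap_values(X_train)

# Classifiers return one set of values per class (a list or a 3-D array,
# depending on the shap version); this assumes a binary model and keeps class 1
if isinstance(shap_values, list):
    shap_values = shap_values[1]
elif shap_values.ndim == 3:
    shap_values = shap_values[:, :, 1]

# Generate a summary plot - this becomes part of your compliance documentation
shap.summary_plot(shap_values, X_train, show=False)
plt.savefig('compliance_report_shap.png', dpi=300, bbox_inches='tight')
print("Transparency report saved as compliance_report_shap.png")
```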

In my experience, regulators don’t expect you to show them the raw SHAP values (that would be overwhelming). But having a clear visualization that shows which features drive decisions? That’s gold. I’ve had auditors actually compliment me on this approach.
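Alongside the plot, a short text ranking of features can be easier to paste into a written report. A common global summary is the mean absolute SHAP value per feature. The sketch below computes that from plain Python lists so it runs without any dependencies; the numbers and feature names are invented for illustration, and `rank_features` is a hypothetical helper. With a real 2-D NumPy array of SHAP values you could pass `shap_values.tolist()`.

```python
def rank_features(shap_rows: list[list[float]], feature_names: list[str]) -> list[tuple[str, float]]:
    """Rank features by mean absolute SHAP value, largest first."""
    totals = [0.0] * len(feature_names)
    for row in shap_rows:
        for i, value in enumerate(row):
            totals[i] += abs(value)
    means = [total / len(shap_rows) for total in totals]
    return sorted(zip(feature_names, means), key=lambda pair: pair[1], reverse=True)


# Invented values: one row per prediction, one column per feature.
example_rows = [
    [0.5, -0.25, 0.0],
    [-1.5, 0.75, 0.25],
]
example_features = ["income", "tenure", "age"]

for name, importance in rank_features(example_rows, example_features):
    print(f"{name}: {importance:.3f}")
```

Taking the absolute value first matters: a feature that pushes some predictions up and others down would otherwise average out to near zero and look unimportant.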

Step 4: Implement Continuous Monitoring

AI regulation in 2026 requires ongoing compliance, not just a one-time checkbox. You need to monitor your models for drift, bias, and performance degradation. I use a monitoring script that runs daily via cron:

```python
#!/usr/bin/env python3
# monitor_ai_compliance.py - Daily compliance check
import json
from datetime import datetime

import requests


def check_model_drift(model_endpoint, baseline_accuracy):
    """Return True if accuracy is within tolerance, False if an alert was logged."""
    # Ask the model API for its current accuracy (assumes /health reports it)
    response = requests.get(f"{model_endpoint}/health", timeout=10)
    response.raise_for_status()
    data = response.json()

    current_accuracy = data.get('accuracy', 0)
    drift_threshold = baseline_accuracy * 0.9  # 10% relative drop triggers alert

    if current_accuracy < drift_threshold:
        print(f"ALERT: Model accuracy dropped from {baseline_accuracy} to {current_accuracy}")

        # Log to compliance system
        log_entry = {
            'timestamp': datetime.now().isoformat(),
            'alert_type': 'ACCURACY_DROP',
            'model_endpoint': model_endpoint,
            'baseline': baseline_accuracy,
            'current': current_accuracy
        }
        with open('/var/log/ai_compliance/drift_alerts.json', 'a') as f:
            f.write(json.dumps(log_entry) + '\n')
        return False

    return True


# Run check
if __name__ == "__main__":
    check_model_drift('http://localhost:5000/model', 0.92)
```

I've set this up for multiple clients, and it catches issues before they become compliance nightmares. One client avoided a potential fine because this script flagged a model that started showing bias against a particular demographic group after a data update.
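An accuracy check alone will not surface bias, so a daily job would also need a fairness metric. The sketch below computes the two metrics named in the checklist, demographic parity (gap in positive-prediction rates between groups) and equal opportunity (gap in true-positive rates), on plain lists. The data is invented, the function names are hypothetical, and it assumes binary 0/1 predictions and labels with at least one positive label per group.

```python
from collections import defaultdict


def rate_by_group(values: list[int], groups: list[str]) -> dict[str, float]:
    """Average of 0/1 values within each group."""
    buckets = defaultdict(list)
    for value, group in zip(values, groups):
        buckets[group].append(value)
    return {group: sum(vals) / len(vals) for group, vals in buckets.items()}


def demographic_parity_gap(preds: list[int], groups: list[str]) -> float:
    """Largest difference in positive-prediction rate between any two groups."""
    rates = rate_by_group(preds, groups)
    return max(rates.values()) - min(rates.values())


def equal_opportunity_gap(preds: list[int], labels: list[int], groups: list[str]) -> float:
    """Largest difference in true-positive rate between any two groups."""
    positives = [(p, g) for p, y, g in zip(preds, labels, groups) if y == 1]
    rates = rate_by_group([p for p, _ in positives], [g for _, g in positives])
    return max(rates.values()) - min(rates.values())


# Invented example: eight predictions split across two groups.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
labels = [1, 1, 0, 0, 1, 1, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

print("demographic parity gap:", demographic_parity_gap(preds, groups))
print("equal opportunity gap:", equal_opportunity_gap(preds, labels, groups))
```

What counts as an acceptable gap is a policy decision rather than something the code can answer; logging the gap after every data update at least makes a sudden jump visible.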

Step 5: Create a Human Oversight Mechanism

This is where many businesses fall short. The regulations require that high-risk AI systems have human oversight. But what does that actually mean? In practice, I've found that you need a dashboard where humans can review AI decisions and override them if necessary. Here's a minimal Flask app that does this:

```python
from flask import Flask, request, jsonify, render_template
import sqlite3

app = Flask(__name__)
DB_PATH = 'ai_decisions.db'


# Initialize database
def init_db():
    conn = sqlite3.connect(DB_PATH)
    c = conn.cursor()
    c.execute('''CREATE TABLE IF NOT EXISTS decisions
                 (id INTEGER PRIMARY KEY,
                  decision TEXT,
                  confidence REAL,
                  human_reviewed BOOLEAN,
                  human_override BOOLEAN)''')
    conn.commit()
    conn.close()


@app.route('/review', methods=['GET'])
def review_dashboard():
    # List decisions still waiting for a reviewer (needs templates/review.html)
    conn = sqlite3.connect(DB_PATH)
    c = conn.cursor()
    c.execute("SELECT * FROM decisions WHERE human_reviewed=0")
    pending = c.fetchall()
    conn.close()
    return render_template('review.html', decisions=pending)


@app.route('/override', methods=['POST'])
def override_decision():
    # Reject malformed requests instead of crashing with a 500
    payload = request.get_json(silent=True) or {}
    if 'decision_id' not in payload or 'override' not in payload:
        return jsonify({"error": "decision_id and override are required"}), 400

    conn = sqlite3.connect(DB_PATH)
    c = conn.cursor()
    c.execute("UPDATE decisions SET human_reviewed=1, human_override=? WHERE id=?",
              (bool(payload['override']), payload['decision_id']))
    conn.commit()
    conn.close()
    return jsonify({"status": "updated"})


if __name__ == '__main__':
    init_db()
    app.run(debug=True)  # debug mode is for local testing only
```

I've seen companies try to skip this step, thinking automation is enough. Big mistake. Regulators want to see that a human can step in. This dashboard gives you that capability with minimal overhead.
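The dashboard reviews decisions after the fact. For the "sign-off before execution" pattern from the checklist, the action itself has to be blocked until a person approves it. Here is a minimal sketch of such a gate; `GatedDecision` and `ApprovalRequired` are hypothetical names, and a real system would also persist who approved what and when.

```python
from dataclasses import dataclass
from typing import Callable, Optional


class ApprovalRequired(Exception):
    """Raised when a gated action is executed without human sign-off."""


@dataclass
class GatedDecision:
    description: str
    action: Callable[[], str]           # the high-stakes step to run once approved
    approved_by: Optional[str] = None   # reviewer identity, set by approve()

    def approve(self, reviewer: str) -> None:
        """Record the human reviewer who signed off."""
        self.approved_by = reviewer

    def execute(self) -> str:
        """Run the action only if a reviewer has approved it."""
        if self.approved_by is None:
            raise ApprovalRequired(f"'{self.description}' needs human sign-off")
        return self.action()


# Illustrative usage with a made-up action.
decision = GatedDecision("Reject loan application", lambda: "rejection sent")

try:
    decision.execute()
except ApprovalRequired as err:
    print("blocked:", err)

decision.approve("reviewer@example.com")
print(decision.execute())
```

Keeping the check inside `execute` means callers cannot forget it: there is no code path that runs the action without going through the approval test.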

Step 6: Stay Updated with Regulatory Changes

AI regulation in 2026 is still evolving. Just last month, a new amendment added requirements for foundation models. I recommend setting up a scheduled job that polls regulatory bodies for updates. Here's a simple script to run from cron (the URL is a placeholder; point it at whatever feed or API your regulator actually publishes):

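```bash
#!/bin/bash
# check_regulatory_updates.sh

# On the first run last_check.txt does not exist yet, so fall back to an
# arbitrary start date (adjust to taste)
SINCE=$(cat last_check.txt 2>/dev/null || echo "2026-01-01")

# -f makes curl fail on HTTP errors; jq -r writes plain text, not quoted JSON strings
curl -sf "https://api.regulatory-body.org/updates?since=${SINCE}" | \
    jq -r '.updates[] | "\(.date): \(.title)"' >> regulatory_updates.log

# Update the last check timestamp
date +%Y-%m-%d > last_check.txt
```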

I run this every Monday morning. It's saved me from being blindsided by new requirements more times than I can count.
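A raw feed gets noisy fast, so it can help to keep only the items that touch your own systems. The sketch below filters an already-parsed response by date and keyword. It assumes the same JSON shape the `jq` filter above implies (an `updates` list with `date` and `title` fields); the sample payload and keywords are invented, and `relevant_updates` is a hypothetical helper.

```python
from datetime import date


def relevant_updates(payload: dict, since: str, keywords: list[str]) -> list[str]:
    """Return 'date: title' lines newer than `since` whose title mentions a keyword."""
    cutoff = date.fromisoformat(since)
    hits = []
    for item in payload.get("updates", []):
        if date.fromisoformat(item["date"]) <= cutoff:
            continue  # already seen on a previous check
        title = item["title"].lower()
        if any(keyword.lower() in title for keyword in keywords):
            hits.append(f"{item['date']}: {item['title']}")
    return hits


# Invented payload for illustration only.
sample_payload = {
    "updates": [
        {"date": "2026-02-10", "title": "Guidance on foundation model obligations"},
        {"date": "2026-02-12", "title": "Office closure notice"},
        {"date": "2026-01-05", "title": "Foundation model consultation opens"},
    ]
}

for line in relevant_updates(sample_payload, "2026-02-01", ["foundation model", "high-risk"]):
    print(line)
```

Keyword filtering will miss updates phrased in unexpected ways, so it works best as a way to prioritize reading rather than a reason to skip the full log.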

Step 7: Prepare for Audits

Finally, you need to be audit-ready at all times. I keep a compliance checklist that I run through quarterly. Here's the core of it:

- All high-risk AI systems have documented risk assessments

- Training data is documented and bias-tested

- Human oversight logs are complete for the past 12 months

- Model cards are up-to-date for every deployed model

- Transparency reports (SHAP plots, etc.) are generated and stored

- Audit logs show clear version history
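Several items on this list come down to whether each model's documentation is complete. One way to make that checkable is to keep the data card as structured data rather than free text. This is a minimal sketch using the three fields mentioned in the checklist table (source, bias checks, exclusions); the `DataCard` class, its `gaps` method, and the example values are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DataCard:
    model_name: str
    source: str = ""                                       # where the training data came from
    bias_checks: list[str] = field(default_factory=list)   # fairness tests that were run
    exclusions: list[str] = field(default_factory=list)    # data deliberately left out

    def gaps(self) -> list[str]:
        """Name the required fields that are still empty."""
        missing = []
        if not self.source.strip():
            missing.append("source")
        if not self.bias_checks:
            missing.append("bias_checks")
        return missing


# Made-up example card.
card = DataCard(
    model_name="customer_support_chatbot",
    source="Support tickets, 2023-2025",
    exclusions=["tickets containing payment details"],
)

print(json.dumps(asdict(card), indent=2))
print("missing:", card.gaps())
```

Exclusions are not treated as required here, since a dataset may legitimately have none; whether that holds for your documentation standard is a judgment call.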

I use a simple script to generate a compliance report automatically:

```python
#!/usr/bin/env python3
# generate_compliance_report.py
import datetime

# Statuses below are hard-coded sample values; replace them with your own audit data
report = f"""
AI Compliance Report - {datetime.date.today()}
========================================

1. High-Risk Systems: 3 identified
   - System A: Compliant (last audit: 2026-01-15)
   - System B: Needs attention (missing human oversight logs)
   - System C: Compliant

2. Training Data Documentation: Complete
3. Transparency Reports: Generated for all systems
4. Audit Logs: Complete through 2026-02-28
5. Human Oversight: Dashboard operational, 95% review rate

RECOMMENDATIONS:
- Schedule human oversight training for System B operators
- Update model card for System A (new version deployed)
"""

print(report)
```

In my experience, having this ready before an audit makes the process painless. I've been through three regulatory audits now, and the ones that went smoothly were always the ones where I had this report pre-generated.
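If the statuses live in structured data, the report can be built from it instead of being edited by hand. The sketch below shows one possible shape, assuming each system is a dict with a `name` and a list of open `issues`; that structure, the `build_report` function, and the sample entries are assumptions for illustration.

```python
from datetime import date


def build_report(systems: list[dict], today: date) -> str:
    """Render a plain-text compliance summary from per-system issue lists."""
    lines = [f"AI Compliance Report - {today.isoformat()}", "=" * 40]
    lines.append(f"High-Risk Systems: {len(systems)} identified")
    for system in systems:
        issues = system.get("issues", [])
        if issues:
            status = "Needs attention (" + "; ".join(issues) + ")"
        else:
            status = "Compliant"
        lines.append(f"- {system['name']}: {status}")
    open_count = sum(1 for system in systems if system.get("issues"))
    lines.append(f"Systems needing attention: {open_count}")
    return "\n".join(lines)


# Sample entries mirroring the hand-written report above.
sample_systems = [
    {"name": "System A", "issues": []},
    {"name": "System B", "issues": ["missing human oversight logs"]},
    {"name": "System C", "issues": []},
]

print(build_report(sample_systems, date(2026, 3, 1)))
```

Passing the date in as an argument, rather than calling `date.today()` inside the function, keeps the output reproducible when a report has to be regenerated for a past audit.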

Look, I'm not going to sugarcoat it—AI regulation in 2026 is complex and demanding. But it's also necessary. The businesses that treat compliance as a burden are the ones that get fined. The ones that treat it as a framework for building better, more trustworthy AI? They're the ones that thrive.

Start with step one today. Classify your systems. If you find you're mostly low-risk, you can breathe easier. But if you're like most businesses I work with, you'll find a few high-risk systems that need immediate attention. That's fine—now you know what to do.

The key takeaway from this complete survival guide is simple: compliance isn't a one-time project. It's an ongoing process. Build the systems I've shown you here, automate where you can, and stay vigilant. Your business—and your customers—will be better for it.