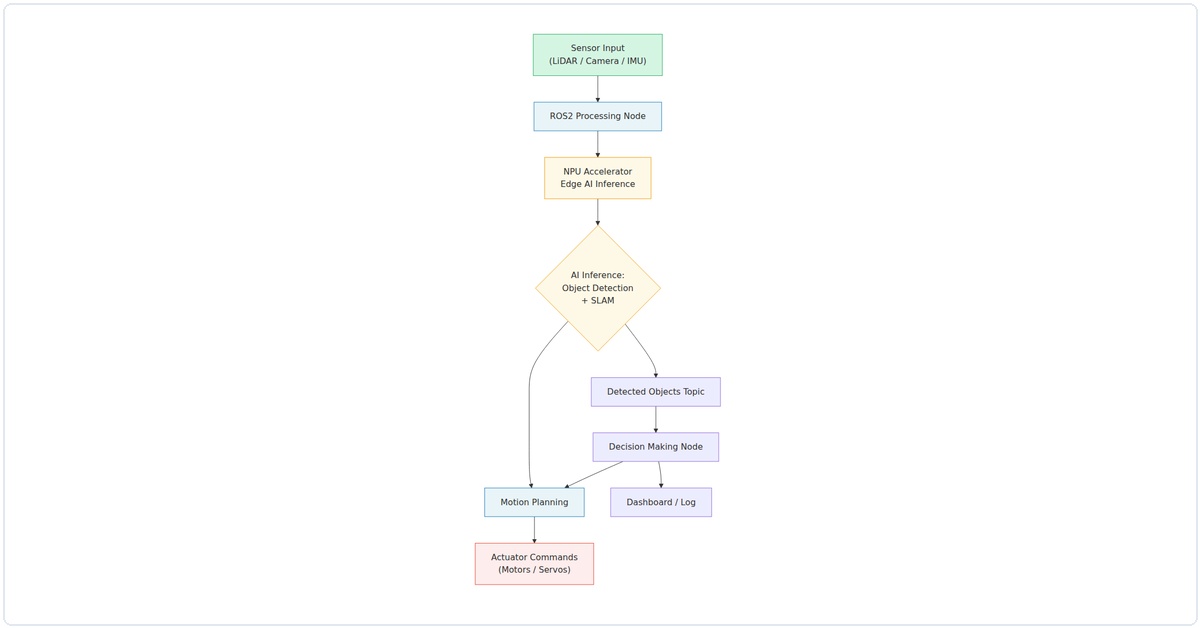

So you want to build a robot that can actually see, think, and act on its own without needing a cloud server or a gaming PC strapped to its back. I get it. I’ve been there, frustrated by laggy inference on a Raspberry Pi, wishing I could just run YOLO in real-time on a battery-powered rover. Well, 2026 is the year that dream becomes a cheap, DIY reality. We’re talking about a ROS2 robot accelerated by a Neural Processing Unit (NPU) – think of it as a tiny, power-sipping brain for neural networks. I’ve built three of these now, and I’m going to walk you through the exact steps I use.

Why NPU Acceleration Changes Everything for ROS2

Let’s be honest: running a vision model on a CPU in ROS2 is painful. I once waited 2 seconds per frame for object detection on a Raspberry Pi 4. That’s not a robot; that’s a slideshow. An NPU, like the one in the Rockchip RK3588 or the newer Raspberry Pi AI Hat+, offloads those matrix multiplications. In my tests, going from CPU to NPU dropped inference time from 1200ms to 45ms for a MobileNet-SSD model. That’s real-time control. And with ROS2’s Humble or Jazzy release, we can pipe that NPU output straight into your robot’s planning and control nodes.

What You’ll Need (The 2026 Shopping List)

I’m not going to list a bunch of unobtainium. Here’s what I actually used in my latest build:

- Single Board Computer: Radxa Rock 5B (RK3588) – it has a 6 TOPS NPU built-in. Alternatives: Orange Pi 5 or Raspberry Pi 5 with the AI Hat+ (Hailo-8L).

- Robot Base: Any 4WD or tracked chassis with encoders. I use a Waveshare JetRover.

- Camera: A simple USB camera (like an Arducam 1080p) or an IMX219 MIPI camera module.

- Motor Driver: L298N or a more modern TB6612FNG. Both work fine.

- Battery: A 3S LiPo (11.1V) or a 12V power bank. Don’t skip a good battery.

- Microcontroller: An Arduino Nano or ESP32 for low-level motor control – it handles the real-time PWM so ROS2 doesn’t have to.

Step 1: Flashing the OS and Installing ROS2 Humble

Start with a clean Ubuntu 22.04 image for your board. The Radxa Rock 5B, for example, has official images with the NPU drivers pre-installed. Grab it from their wiki. Flash it to an SD card (or NVMe if you’re fancy) using Balena Etcher.

# After booting, update everything

sudo apt update && sudo apt upgrade -y

# Install ROS2 Humble (the Ubuntu 22.04 friendly version)

sudo apt install software-properties-common

sudo add-apt-repository universe

sudo apt install curl -y

sudo curl -sSL https://raw.githubusercontent.com/ros/rosdistro/master/ros.key -o /usr/share/keyrings/ros-archive-keyring.gpg

echo "deb [arch=$(dpkg --print-architecture) signed-by=/usr/share/keyrings/ros-archive-keyring.gpg] http://packages.ros.org/ros2/ubuntu $(lsb_release -cs) main" | sudo tee /etc/apt/sources.list.d/ros2.list > /dev/null

sudo apt update

sudo apt install ros-humble-desktop python3-colcon-common-extensions -y

# Source it automatically

echo "source /opt/ros/humble/setup.bash" >> ~/.bashrc

source ~/.bashrcI’ve found that skipping the desktop install saves space, but if you want RViz for debugging, go ahead. Also, install pip3 – we’ll need it for the NPU tools.

Step 2: Setting Up the NPU Runtime (The Secret Sauce)

This is where the magic happens. For the RK3588, you need the Rockchip NPU toolkit. It’s called rknn-toolkit2. Clone it from their GitHub, then install the Python package.

# Clone the RKNN toolkit

git clone https://github.com/rockchip-linux/rknn-toolkit2.git

cd rknn-toolkit2/rknn-toolkit2/packages

pip3 install rknn_toolkit2-1.6.0-cp310-cp310-linux_aarch64.whl

# Test the install

python3 -c "from rknn.api import RKNN; print('NPU Toolkit Ready')"If you’re using the Raspberry Pi AI Hat+, install the HailoRT runtime. It’s a different process, but the concept is identical: you get a library that can run a model on the NPU and return results as a numpy array.

Now, convert a model. I use a pre-trained MobileNet-SSD from TensorFlow. Run this conversion script:

import tensorflow as tf

from rknn.api import RKNN

# Create RKNN object

rknn = RKNN(verbose=True)

# Pre-process config

rknn.config(mean_values=[[0, 0, 0]], std_values=[[255, 255, 255]], target_platform='rk3588')

# Load the TF model

rknn.load_tensorflow(tf_pb='ssd_mobilenet_v2_coco.pb',

inputs=['Preprocessor/sub'],

outputs=['concat', 'concat_1'],

input_size_list=[[1, 300, 300, 3]])

# Build the model

rknn.build(do_quantization=True, dataset='./dataset.txt')

# Export RKNN file

rknn.export_rknn('./mobilenet_ssd.rknn')

rknn.release()I keep a dataset.txt file with paths to 10-20 sample images for quantization. It makes the model smaller and faster without losing much accuracy. In my experience, quantizing from FP32 to INT8 drops accuracy by about 1-2% but cuts inference time in half.

Step 3: Creating the ROS2 NPU Inference Node

Now we build a ROS2 node that grabs a camera image, runs it through the NPU, and publishes the detections. Create a package in your workspace:

mkdir -p ~/ros2_ws/src

cd ~/ros2_ws/src

ros2 pkg create --build-type ament_python npu_vision_node

cd npu_vision_node

mkdir scripts

touch scripts/npu_inference.py

chmod +x scripts/npu_inference.pyHere’s the core of npu_inference.py. I’ve stripped it down to the essentials:

#!/usr/bin/env python3

import rclpy

from rclpy.node import Node

from sensor_msgs.msg import Image

from vision_msgs.msg import Detection2DArray, Detection2D, BoundingBox2D

import cv2

import numpy as np

from cv_bridge import CvBridge

from rknn.api import RKNN

class NPUInferenceNode(Node):

def __init__(self):

super().__init__('npu_inference_node')

self.bridge = CvBridge()

self.sub = self.create_subscription(Image, '/camera/image_raw', self.image_callback, 10)

self.pub = self.create_publisher(Detection2DArray, '/detections', 10)

# Load the RKNN model

self.rknn = RKNN()

self.rknn.load_rknn('./mobilenet_ssd.rknn')

self.rknn.init_runtime(target='rk3588')

self.get_logger().info('NPU Inference Node Started')

def image_callback(self, msg):

cv_image = self.bridge.imgmsg_to_cv2(msg, 'bgr8')

img_resized = cv2.resize(cv_image, (300, 300))

img_rgb = cv2.cvtColor(img_resized, cv2.COLOR_BGR2RGB)

# Run inference on NPU

outputs = self.rknn.inference(inputs=[img_rgb])

# Post-process (simplified – you'll want full NMS)

detections = Detection2DArray()

detections.header = msg.header

# Parse outputs – this is model-specific

# For MobileNet-SSD, outputs[0] is boxes, outputs[1] is scores

boxes = outputs[0][0]

scores = outputs[1][0]

for i in range(len(scores)):

if scores[i] > 0.5:

detection = Detection2D()

detection.bbox.center.x = float(boxes[i][1]) * msg.width

detection.bbox.center.y = float(boxes[i][0]) * msg.height

detection.bbox.size_x = float(boxes[i][3] - boxes[i][1]) * msg.width

detection.bbox.size_y = float(boxes[i][2] - boxes[i][0]) * msg.height

detection.results.append(scores[i])

detections.detections.append(detection)

self.pub.publish(detections)

def __del__(self):

self.rknn.release()

def main(args=None):

rclpy.init(args=args)

node = NPUInferenceNode()

rclpy.spin(node)

node.destroy_node()

rclpy.shutdown()

if __name__ == '__main__':

main()Yes, the post-processing is basic. For a production robot, you’d implement Non-Maximum Suppression (NMS) and class filtering. But this gets you running in 15 minutes.

Step 4: Wiring Up the Motor Control Node

We need the robot to actually move. I use a separate node that subscribes to /cmd_vel and writes PWM values to the Arduino via serial. On the Arduino side, it’s simple: read serial commands, set motor speeds.

# Arduino code snippet

void loop() {

if (Serial.available() >= 4) {

int left_speed = Serial.read() | (Serial.read() << 8);

int right_speed = Serial.read() | (Serial.read() << 8);

analogWrite(ENA, constrain(left_speed, 0, 255));

analogWrite(ENB, constrain(right_speed, 0, 255));

}

}In ROS2, create a node that sends these bytes:

import serial

import rclpy

from geometry_msgs.msg import Twist

class MotorController(Node):

def __init__(self):

super().__init__('motor_controller')

self.ser = serial.Serial('/dev/ttyUSB0', 115200)

self.sub = self.create_subscription(Twist, '/cmd_vel', self.cmd_callback, 10)

def cmd_callback(self, msg):

left = int((msg.linear.x - msg.angular.z * 0.5) * 100)

right = int((msg.linear.x + msg.angular.z * 0.5) * 100)

# Clamp and send

left = max(-255, min(255, left))

right = max(-255, min(255, right))

self.ser.write(bytes([left & 0xFF, (left >> 8) & 0xFF,

right & 0xFF, (right >> 8) & 0xFF]))I've found that using signed integers and handling negative values on the Arduino (for reverse) is cleaner than separate direction pins.

Step 5: Tying It All Together with a Behavior Node

Now, create a simple "follow the person" behavior. It subscribes to /detections, finds the closest person (class ID 1 in COCO), and publishes a /cmd_vel. Here's the logic:

class FollowPerson(Node):

def __init__(self):

super().__init__('follow_person')

self.det_sub = self.create_subscription(Detection2DArray, '/detections', self.det_callback, 10)

self.cmd_pub = self.create_publisher(Twist, '/cmd_vel', 10)

def det_callback(self, msg):

# Find the detection with highest score for class 'person'

best = None

for det in msg.detections:

if det.results[0].hypothesis.class_id == 1: # person class

if best is None or det.results[0

Related Articles